Why you should use nopaque

nopaque is a custom-built web application for researchers who want to get more out of their images and texts without having to deal with the technical side of things. You can focus on what really interests you while nopaque does the rest.

Speeds up your work

The tools in nopaque are carefully selected to form a complete suite, so you are not held up by compatibility issues.

Cloud infrastructure

All computation runs within nopaque’s cloud infrastructure. You don't need to install any software. Great, right?

User friendly

You can start right away without having to read mile-long manuals. All services come with default settings that make it easy for you to just get going. Also great, right?

Meshing processes

No matter where you step in, nopaque supports and accompanies your research. Its workflow is designed to tie in with your research process.

What nopaque can do for you

All services and processes are logically linked and build on each other. You can follow them step by step or go straight to the one that best suits your needs. And while nopaque’s cloud handles the processing, you can just keep working.

File setup

Digital copies of text-based research data (books, letters, etc.) often comprise various files and formats. nopaque converts and merges those files to facilitate further processing and the application of other services.

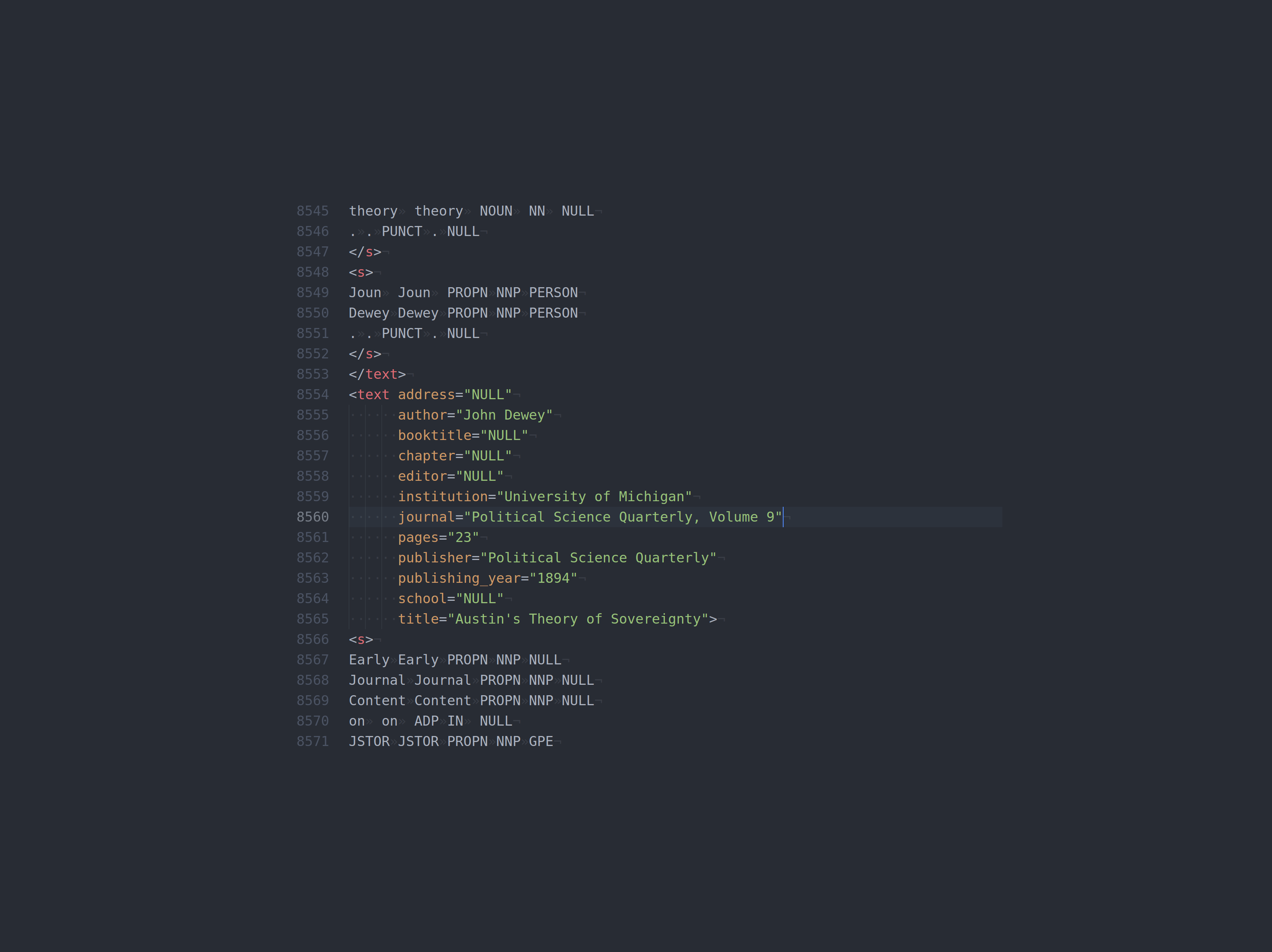

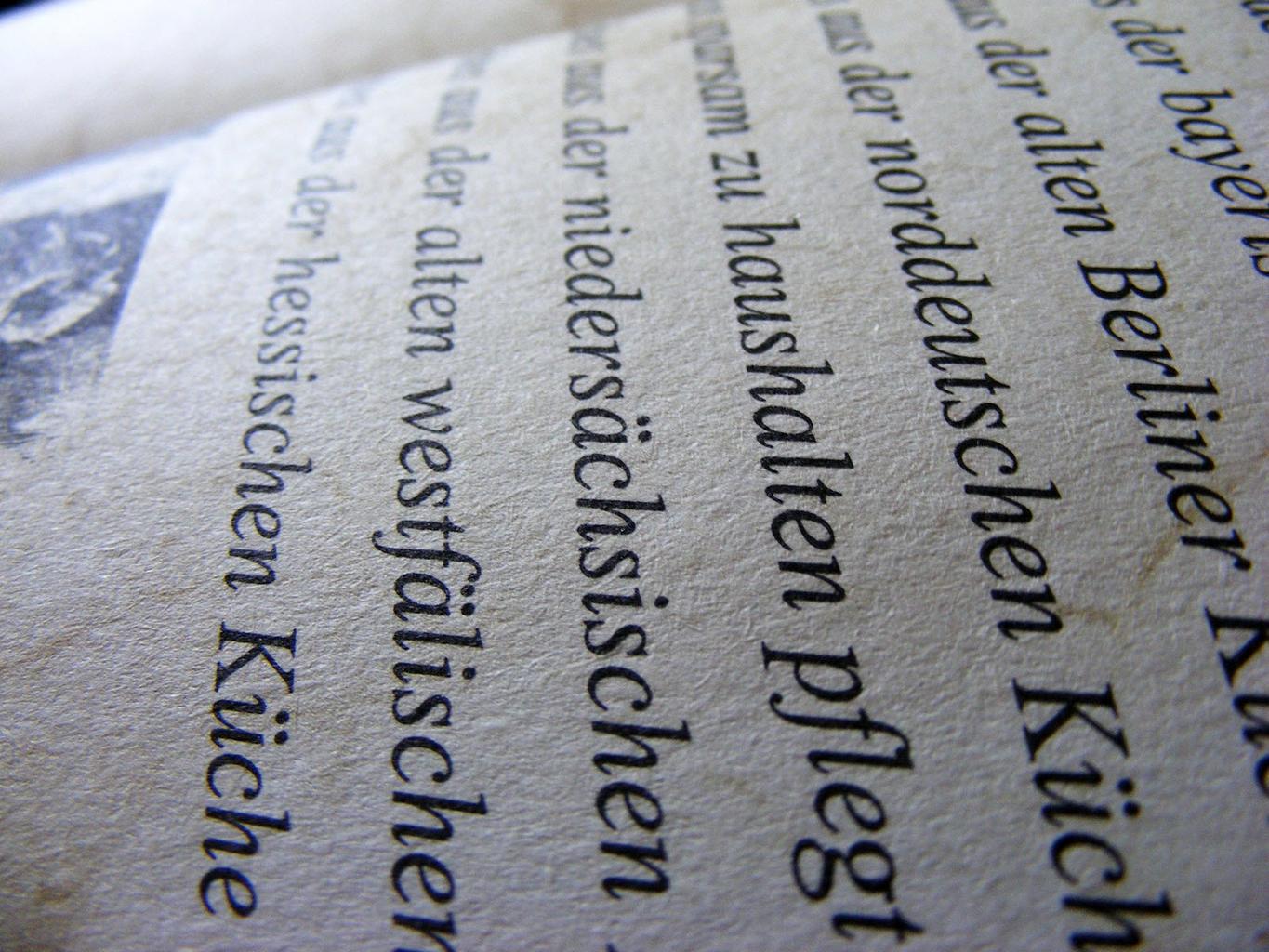

Optical Character Recognition

nopaque uses OCR to convert your image data, such as photos or scans, into machine-readable text. This step enables you to proceed with further computational analysis of your documents.

Registration and login

Want to boost your research and get going? nopaque is free and no download is needed. Register now!

Register

Introduction video